1. Introduction: The Great Data Traffic Jam in the Sky

In the “New Space” era, we are facing an architectural impasse. While modern constellations of Low Earth Orbit (LEO) satellites are capable of generating a staggering volume of high-resolution Earth imagery and scientific data, the “space-ground network link” remains a massive bottleneck. We are essentially trying to pipe a flood of information through a straw. Much of this data—images of empty seas or cloud cover—is effectively “noise” that consumes precious bandwidth.

The solution is “In-Space Computing”: processing data directly in orbit so we only transmit the critical, high-value portions back to Earth. However, there is a baffling lag in our orbital infrastructure. The flagship Mars 2020 mission, for instance, relied on the BAE RAD750—a processor originally released in 2001 that operates at 300 million instructions per second (MIPS). For context, the mobile chip in your pocket is orders of magnitude faster. To build the “Space Cloud,” we must ask why we are still launching 20-year-old silicon when the future of orbital computing looks less like a supercomputer and more like a smartphone.
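To make in-orbit filtering concrete, here is a minimal Python sketch. It assumes each image tile already carries a cloud-fraction estimate and an open-ocean flag produced by some onboard classifier. The `Tile` type, `select_for_downlink`, `downlink_savings`, and the 0.6 cloud threshold are hypothetical illustrations, not taken from any mission's flight software.

```python
from dataclasses import dataclass


@dataclass
class Tile:
    tile_id: int
    size_bytes: int
    cloud_fraction: float  # hypothetical classifier output: 0.0 clear, 1.0 fully overcast
    is_open_ocean: bool    # hypothetical classifier output: tile shows only open water


def select_for_downlink(tiles, max_cloud=0.6):
    """Keep only tiles likely to contain useful ground detail (threshold is illustrative)."""
    return [t for t in tiles if t.cloud_fraction <= max_cloud and not t.is_open_ocean]


def downlink_savings(tiles, kept):
    """Return (bytes captured, bytes sent, fraction of downlink bandwidth saved)."""
    total = sum(t.size_bytes for t in tiles)
    sent = sum(t.size_bytes for t in kept)
    saved = (1 - sent / total) if total else 0.0
    return total, sent, saved


if __name__ == "__main__":
    captured = [
        Tile(0, 50_000_000, 0.9, False),  # mostly cloud
        Tile(1, 50_000_000, 0.1, True),   # clear, but empty sea
        Tile(2, 50_000_000, 0.2, False),  # clear land: worth sending
        Tile(3, 50_000_000, 0.7, False),  # too cloudy for the threshold
    ]
    kept = select_for_downlink(captured)
    total, sent, saved = downlink_savings(captured, kept)
    print(f"kept {[t.tile_id for t in kept]}: {sent / 1e6:.0f} of {total / 1e6:.0f} MB ({saved:.0%} saved)")
```

Running it prints `kept [2]: 50 of 200 MB (75% saved)`. Only the filtering decision happens in orbit; the bandwidth it frees is whatever the discarded tiles would have consumed.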

2. The Efficiency Paradox: Why “Throughput per Energy” (TpE) is the Only Metric That Matters

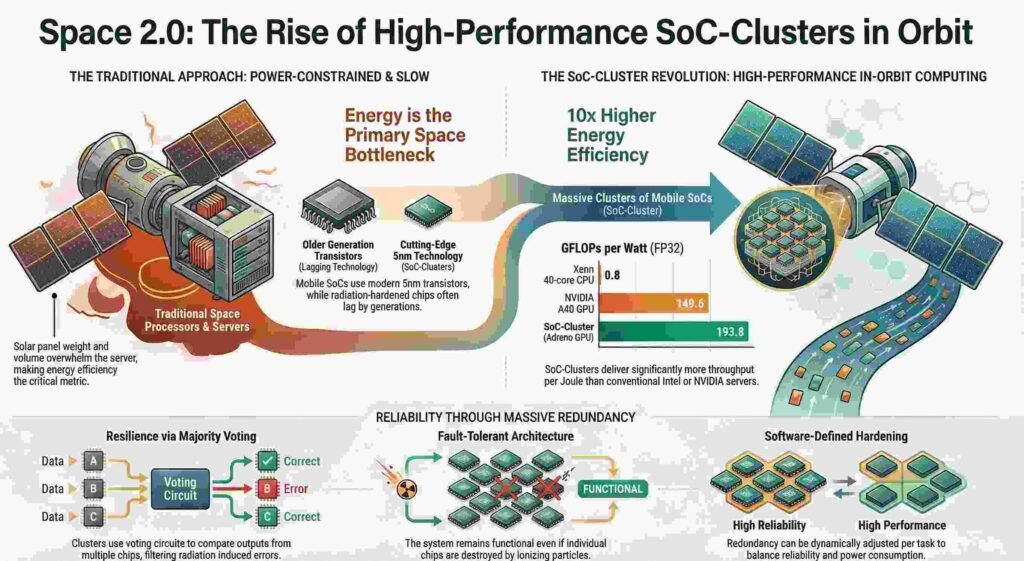

In the aerospace world, we live and die by SWaP (Size, Weight, and Power). For a systems architect, designing for space reduces SWaP to three throughput metrics. At launch, we care about Throughput per Weight (TpW) and Throughput per Volume (TpV). But once the server is deployed, energy efficiency, measured as Throughput per Energy (TpE), becomes the primary constraint, because every watt consumed in orbit must be generated by the satellite's own solar panels.

This leads to the “solar panel problem,” a counter-intuitive reality of satellite design. A power-hungry monolithic server needs large solar panels, and the mass of those panels scales roughly in proportion to the power they must supply; in many cases, the panels required to keep a server running outweigh and outsize the server itself. As an architect, I look at it this way: every watt saved is solar-array mass that never has to be launched, so a chip's power draw can matter as much to the mass budget as the chip itself. In orbit, efficiency isn’t just about “going green”; it’s a hard constraint imposed by launch mass.
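The three metrics and the solar-array penalty reduce to a few lines of arithmetic. In this sketch, the array specific power (50 W/kg), the 1.3 sizing margin, and both server designs are placeholder assumptions chosen for illustration, not measured figures; the point is only that power draw feeds directly into launch mass.

```python
from dataclasses import dataclass


@dataclass
class ServerDesign:
    name: str
    ops_per_second: float  # sustained useful throughput
    power_watts: float     # average electrical draw in orbit
    mass_kg: float         # compute hardware only
    volume_l: float        # compute hardware only, in liters

    def tpw(self) -> float:
        """Throughput per Weight: ops/s per kg of hardware (matters at launch)."""
        return self.ops_per_second / self.mass_kg

    def tpv(self) -> float:
        """Throughput per Volume: ops/s per liter of hardware (matters at launch)."""
        return self.ops_per_second / self.volume_l

    def tpe(self) -> float:
        """Throughput per Energy: ops/s per watt, i.e. operations per joule."""
        return self.ops_per_second / self.power_watts


def solar_array_mass_kg(load_watts: float, specific_power_w_per_kg: float = 50.0,
                        margin: float = 1.3) -> float:
    """Rough array mass needed to supply load_watts (both defaults are assumptions)."""
    return load_watts * margin / specific_power_w_per_kg


if __name__ == "__main__":
    designs = [
        ServerDesign("monolithic (hypothetical)", 2e12, 600.0, 10.0, 20.0),
        ServerDesign("SoC-cluster (hypothetical)", 2e12, 80.0, 8.0, 12.0),
    ]
    for d in designs:
        array_kg = solar_array_mass_kg(d.power_watts)
        print(f"{d.name}: TpE={d.tpe():.2e} ops/J, "
              f"TpW={d.tpw():.2e} ops/s/kg, TpV={d.tpv():.2e} ops/s/L, "
              f"array={array_kg:.1f} kg vs hardware={d.mass_kg:.1f} kg")
```

With these made-up inputs, the monolithic design's array (about 15.6 kg) outweighs its 10 kg of hardware, while the lower-power design needs only about 2 kg of array for the same throughput.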

3. The Rise of the “SoC-Cluster”: Massive Tiny Chips vs. Monolithic Giants

The most effective way to optimize TpE is to look toward the mobile industry. We are seeing a shift away from monolithic giants like Intel Xeon CPUs or high-end NVIDIA GPUs in favor of the “SoC-Cluster”—a pool of low-power System-on-Chips like the Qualcomm Snapdragon or Apple Silicon.

The technology gap is stark. A mobile chip like the Snapdragon 888 is built on a 5nm process, whereas many server-grade chips remain at 10nm or larger, and traditional space-grade processors lag further still. These chips are “born” for the constraints of a satellite because they were refined for the battery-limited world of mobile phones. This isn’t just theory: the Multiview Onboard Computational Imager (MOCI) mission at the University of Georgia used an NVIDIA Jetson TX2 to process data onboard, specifically to reduce the high downlink rates it would otherwise require.

SoC-Clusters are also already proven on Earth: Alibaba’s Edge Node Service (ENS) uses them to power cloud gaming for battery-constrained smartphones. By offloading work to dedicated Digital Signal Processor (DSP) and MediaCodec engines rather than general-purpose cores, these clusters can be 9.3–15.5x more energy-efficient than traditional servers at video transcoding.

4. Democracy in Orbit: Using “Majority Voting” to Defeat Radiation

Space is a lethal environment for silicon. Galactic Cosmic Rays (GCRs), solar energetic particles, and particles trapped in the Van Allen belts can cause “Single-Event Upsets” (SEUs): bit flips that corrupt data. Traditionally, we solved this with expensive radiation-hardened hardware. A more resilient, architectural strategy is “N-modular redundancy,” or “majority voting.”

Instead of a single hardened processor, we use a decentralized topology. Imagine a server organized into 12 PCBs, each hosting 5 mobile SoCs, for a total of 60 chips running the same computation. A voting circuit collects their outputs and accepts only the majority result. This “Democracy in Orbit” means that if an ionizing particle flips a bit in one chip's result, the remaining chips simply outvote it, and if a particle destroys a chip outright, that chip just drops out of the vote. This makes the system far more robust than a monolithic server: one “dead” chip doesn’t kill the mission, it just slightly reduces the pool of voters.
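The voting rule itself is simple. The sketch below models it in software, assuming all 60 SoCs run the same computation and return directly comparable results; the function name `vote` and the integer outputs are illustrative, and the design described above would perform this step in a hardware voting circuit.

```python
from collections import Counter


def vote(replica_outputs):
    """Majority vote over replicas that ran the same computation.

    None models a chip that produced no output (e.g. destroyed), so it
    drops out of the pool instead of counting as a vote.
    Returns the agreed value, or None if no strict majority exists.
    """
    alive = [o for o in replica_outputs if o is not None]
    if not alive:
        return None
    value, count = Counter(alive).most_common(1)[0]
    return value if 2 * count > len(alive) else None


if __name__ == "__main__":
    CORRECT = 0b1011_0010            # the result every healthy chip should return
    outputs = [CORRECT] * 60         # 12 boards x 5 SoCs
    outputs[7] ^= 1 << 3             # single-event upset: one bit flipped on chip 7
    outputs[42] = None               # chip 42 destroyed: no output at all
    print(vote(outputs) == CORRECT)  # 58 of 59 live chips agree, so this prints True
```

Requiring a strict majority means a tie yields no answer rather than an arbitrary one. Replicating a single job across all 60 chips spends most of the cluster's throughput on reliability; how many replicas vote on each result is a design parameter this sketch leaves open.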

5. From Weeks to Hours: The Milestone of Real-Time Results on the ISS

The transition from downlinking raw data to processing at the edge is already delivering results. Historically, raw data from experiments had to be transmitted to ground-based data centers, a process that could take weeks. The HPE Spaceborne Computer-2 (SBC-2) on the International Space Station recently broke this cycle.

In a landmark NASA experiment, sequencing data from the MinION DNA sequencer, which would once have had to be sent to the ground for processing, was fed into the SBC-2 instead. Using a custom edge solution, the analysis was completed in just eight hours, while the experiment was still on the station.

“The most important thing is the speed to get to the results, and that is what edge computing is all about. The goal is that wherever the data is being produced, you get the results right there.” — Naeem Altaf, IBM Distinguished Engineer and CTO for Space Technology.

6. Space 2.0: Infrastructure as a Service (IaaS) Above the Clouds

We are moving away from “bespoke” satellites toward mass-produced commercial infrastructure. This is the era of “Space 2.0,” where orbital assets are becoming extensions of the terrestrial cloud. Major providers like AWS and Azure are enabling a model of “Space Infrastructure as a Service” (IaaS).

With services like AWS Ground Station, developers can now “rent antenna time” by the minute, treating a satellite pass as just another API call. This eliminates the need to build a proprietary ground station, which can cost between $1 million and $5 million, and shifts ground-segment economics from up-front capital expenditure to a largely pay-as-you-go model, lowering the barrier to entry for the next generation of orbital startups.
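A back-of-the-envelope break-even calculation shows why renting is attractive. The per-minute rate and pass length below are hypothetical placeholders, not published AWS Ground Station prices; only the $1 million to $5 million build cost comes from the text above.

```python
def breakeven_minutes(capex_usd: float, rate_usd_per_min: float) -> float:
    """Minutes of rented antenna time that cost as much as building a station.

    Ignores the staffing and maintenance of an owned station, which would
    push the break-even point even further out.
    """
    if rate_usd_per_min <= 0:
        raise ValueError("rate must be positive")
    return capex_usd / rate_usd_per_min


if __name__ == "__main__":
    RATE_USD_PER_MIN = 10.0  # hypothetical rental price, not a quoted AWS rate
    PASS_MINUTES = 10.0      # hypothetical usable length of one LEO pass
    for capex in (1_000_000, 5_000_000):
        minutes = breakeven_minutes(capex, RATE_USD_PER_MIN)
        print(f"${capex:,} station ~ {minutes:,.0f} rented minutes "
              f"~ {minutes / PASS_MINUTES:,.0f} passes")
```

Under these assumptions, a startup would need tens of thousands of passes before owning a station paid off, which is why a variable-cost model suits early missions.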

7. Conclusion: A Planet Interconnected from the Outside In

The emergence of Space Cloud Computing and distributed nodes is turning our orbit into a high-performance extension of our digital world. This infrastructure enables true autonomy: AI-driven collision avoidance in crowded LEO shells and real-time health monitoring for astronauts on deep-space missions to Mars.

As we look ahead, the “literal Cloud” offers a compelling environmental argument. Above Earth’s atmosphere, solar panels receive unfiltered sunlight (about 1,361 W/m², versus at best roughly 1,000 W/m² at the surface) and, in suitable orbits, far more hours of it, so each square meter of panel produces more electricity. We are looking at the potential for data centers with zero operational carbon emissions, powered by pure, unfiltered solar energy. If the most efficient way to process the world’s data is to move the hardware off-planet, how soon before “the Cloud” is no longer just a metaphor for our digital infrastructure, but a literal description of its location?